
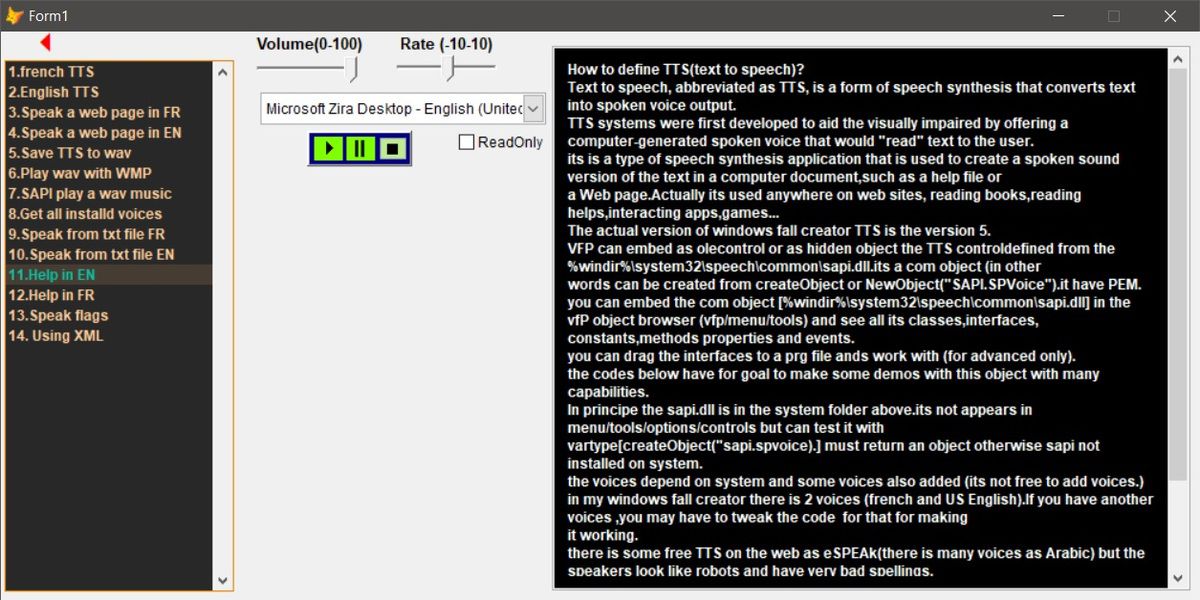

# Streaming Microsoft TTS voices


## New "read aloud" voices in Microsoft Edge

Microsoft today launched a new set of voices in the Microsoft Edge Dev and Canary channels that should make the "read aloud" feature sound much more natural. In response to feedback that the existing voices sounded too robotic and that installing language packs to read other languages was onerous, the new voices are powered by deep neural networks in the cloud.

You can distinguish the new voices from the others by their names: Microsoft says they are labeled "Microsoft Online." Voices with "24kbps" in their name should sound clearer than other standard voices because of their higher audio bitrate.

To try the new voices yourself, select text on a webpage, right-click, and choose "read aloud" from the context menu. A read aloud menu bar then appears, where you can pick among the voices and set how fast they speak.

## Custom Neural Voice in Azure

With Custom Neural Voice, prosody (the tone and duration of each phoneme, the unit of sound that distinguishes one word from another) is combined so that machine learning models running in Azure can closely reproduce an actor’s voice or produce a wholly original one. One set of models converts a script into an acoustic sequence, predicting prosody along the way, while another set converts that acoustic sequence into speech. Microsoft claims that because the models predict the right prosody and synthesize the voice at the same time, Custom Neural Voice produces more natural-sounding voices. AT&T, for example, used Custom Neural Voice to create a Bugs Bunny soundalike at a retail location in Dallas from around 2,000 phrases and lines supplied by a voice actor.

Microsoft is effectively going toe to toe with Google, which in 2019 debuted new AI-synthesized WaveNet voices and standard voices in its Cloud Text-to-Speech service. It has another rival in Amazon, which recently launched Brand Voice, a service that taps AI to generate custom spokespeople and offers a number of voice styles and emotion styles through Amazon Polly, Amazon’s cloud offering that converts text into speech.

According to Microsoft, Custom Neural Voice includes controls to help prevent misuse of the service. When a customer submits a recording, the voice actor makes a statement acknowledging that they (1) understand the technology and (2) are aware the customer is having a voice made. The recording is compared with the model training data using speaker verification to make sure the voices match before the customer can begin creating the voice. Microsoft also contractually requires customers to get consent from voice talent. Beyond this, Microsoft says it reviews each potential use case and has customers agree to a code of conduct before they can begin using Custom Neural Voice.

“We require customers to make very clear it’s a synthetic voice,” Sarah Bird, responsible AI lead for Cognitive Services within Azure AI, said in a statement. “When it’s not immediately obvious in context, explicitly disclose it’s synthetic in a way that’s perceivable by users and not buried in terms.” Microsoft says it’s also working on a way to embed a digital watermark within a synthetic voice to indicate that the content was created with Custom Neural Voice.
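Microsoft does not describe how its speaker-verification check works. As a rough illustration only, a common approach is to turn each recording into a fixed-length speaker embedding with a neural encoder and compare embeddings by cosine similarity against a tuned threshold. The sketch below assumes the embeddings already exist (the encoder itself is out of scope), and the function names and the 0.75 threshold are hypothetical, not part of any Microsoft API.

```python
import math
from collections.abc import Sequence

# Hypothetical acceptance threshold; real systems tune this on labeled data.
DEFAULT_THRESHOLD = 0.75


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cannot compare a zero-length embedding")
    return dot / (norm_a * norm_b)


def mean_embedding(embeddings: Sequence[Sequence[float]]) -> list[float]:
    """Average several utterance embeddings into a single speaker profile."""
    if not embeddings:
        raise ValueError("need at least one embedding")
    dim = len(embeddings[0])
    if any(len(e) != dim for e in embeddings):
        raise ValueError("all embeddings must have the same dimension")
    return [sum(e[i] for e in embeddings) / len(embeddings) for i in range(dim)]


def same_speaker(
    consent_embedding: Sequence[float],
    training_embeddings: Sequence[Sequence[float]],
    threshold: float = DEFAULT_THRESHOLD,
) -> bool:
    """Accept the consent recording only if it matches the training-data speaker."""
    profile = mean_embedding(training_embeddings)
    return cosine_similarity(consent_embedding, profile) >= threshold


if __name__ == "__main__":
    # Toy 3-dimensional embeddings; real speaker embeddings have hundreds of dimensions.
    training = [[0.9, 0.1, 0.0], [0.8, 0.2, 0.1]]
    print(same_speaker([0.85, 0.15, 0.05], training))  # close to the profile
    print(same_speaker([0.0, 0.2, 0.9], training))     # points in a different direction
```

Averaging the training-set embeddings keeps the check to a single comparison; a production system would more likely calibrate the threshold on labeled same-speaker and different-speaker pairs and may score against many reference utterances.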